KEY POINTS

● A “scholar” who insists there is little or no racial bias in child welfare writes a “predictive analytics” algorithm for the State of California. Somehow, the contract to write a so-called “independent ethics review” of the algorithm is given to another “scholar” who also insists there is little or no racial bias in child welfare. In fact, they co-authored a screed on this very topic.

● So we shouldn’t be surprised when the ethics review says it’s just fine if an algorithm says a parent is more likely to abuse a child based solely on the parent’s race.

● A document from the California Department of Social Services indicates the agency was appropriately appalled by the “ethics review.” They scrapped the entire algorithm project.

● But it looks like the same ethics review is about to be used to justify still another algorithm created by the same team as the one the state scrapped. This latest algorithm already is being rolled out, very quietly, in Los Angeles.

● This is only the latest in a long line of predictive analytics “ethics reviews” that raise ethical questions.

In the history of efforts to target families for child abuse investigations or otherwise harass them by using “predictive analytics” algorithms, also called Predictive Risk Modeling (PRM), one of the more spectacular failures was a system called AURA. A product of a commercial software company, and a pet project of the then head of the Los Angeles family policing agency, Philip Browning, it was stopped during the testing phase after it was found to have one little flaw: 95% of the time, when the algorithm predicted something terrible would happen to a child, it didn’t.
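
The arithmetic behind a figure like that is worth spelling out. The sketch below uses purely hypothetical numbers (the base rate, sensitivity and specificity are assumptions chosen for illustration, not AURA's actual figures) to show how a tool that sounds accurate can still be wrong about most of the families it flags, simply because the outcome it is hunting for is rare.

```python
def share_of_flags_that_are_wrong(base_rate, sensitivity, specificity,
                                  population=100_000):
    """Return the fraction of flagged families where the predicted harm never occurs.

    All inputs are hypothetical illustration values, not measured figures.
    """
    at_risk = population * base_rate            # families where harm really would occur
    not_at_risk = population - at_risk          # everyone else
    true_flags = at_risk * sensitivity          # correctly flagged families
    false_flags = not_at_risk * (1 - specificity)  # families flagged in error
    return false_flags / (true_flags + false_flags)


# Assumed inputs: harm is rare (0.5%), the tool catches 80% of real cases
# and correctly clears 92% of families where nothing would happen.
wrong = share_of_flags_that_are_wrong(base_rate=0.005,
                                      sensitivity=0.80,
                                      specificity=0.92)
print(f"{wrong:.0%} of flagged families were false alarms")
```

With these assumed inputs, roughly 95 of every 100 flags are false alarms, even though the tool is "right" about most individual families. The rarer the outcome, the worse this gets.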

After that, California decided to try its hand at building a child welfare algorithm which could be used for a variety of purposes by the state and by county family policing agencies. (In California, family police agencies are county-run.) They gave the job to a team led by Prof. Emily Putnam-Hornstein, the nation’s foremost predictive analytics evangelist. She is co-author of two algorithms used in Allegheny County, Pa. (metropolitan Pittsburgh). One of those algorithms attempts to stamp an invisible “scarlet number” risk score on every child at birth. Putnam-Hornstein’s own extremism is well-documented.

As far as I knew, the California algorithm was still in development.

It’s not.

An excellent story in The Imprint reveals that, after extensive consultation with an impressive and diverse group of people, the California Department of Social Services (CDSS) has pulled the plug.

CDSS concluded that Putnam-Hornstein’s team managed to deliver the worst of both worlds: an algorithm that was overlooking real safety threats and risked worsening racial bias in California child welfare.

It appears that an “independent ethics review” written in 2018, which claimed there were no ethical problems at all, may have backfired. It may have contributed to alarm at CDSS and to the agency’s wise decision to scrap the algorithm project.

Unfortunately, the Los Angeles County Board of Supervisors and the county family policing agency, the Department of Children and Family Services, learned nothing from this. They commissioned Putnam-Hornstein’s group to write an algorithm to, among other things, flag “complex risk” cases. Then, as the Imprint story makes clear, they sneaked it online for three months in three regional DCFS offices, with no opportunity for the community to object – presumably because the community might point out the track record of predictive analytics and of Putnam-Hornstein.

When you go to the web page where Putnam-Hornstein’s group promotes the L.A. algorithm and click on “ethics review,” what do you find? The same appalling ethics review submitted to the state – with a promise that it will be “updated.”

So let’s take a close look at that document, and who wrote it.

Race as a risk factor

Algorithms are not objective. Human beings decide what factors an algorithm will consider when it coughs up a “risk score” concerning a child or family. That risk score may determine anything from whether a family receives “preventive services” to whether a child is removed from the home. Predictive analytics evangelists hate it when critics say “determine” – they say the scores are merely advice to give us humans a little help. But imagine what would happen if it became known that a human ignored a high risk score and something went tragically wrong. So while it is usually, though not always, possible for a human to override the algorithm, it’s not likely to happen very often.

Most of the time, designers of predictive analytics algorithms try to duck charges of racism by not explicitly including race as a factor. Instead, they use lots and lots of criteria related to poverty – is the family on public assistance? Was the family homeless? Are they on Medicaid? etc. – that disproportionately include families of color.

But that wasn’t enough for the authors of the California ethics review. They went out of their way, apparently without even being asked, to say, in effect: It’s just fine if you want to say children are at higher risk of abuse for no other reason than the race of their parents!

This is fine, the authors say, because it will make the algorithm more accurate. But their measure of accuracy is not whether the algorithm predicts actual child abuse, it’s whether the algorithm predicts future involvement in the family policing system: Did the algorithm correctly predict that the family would be labeled “substantiated” child abusers or the child would be placed in foster care?

But that’s not a prediction, that’s a self-fulfilling prophecy. For example, in a system permeated with racial bias, Black families are more likely to be reported, more likely to be substantiated and more likely to have their children placed in foster care. So when the algorithm looks at which families are more likely to be “system involved” the algorithm concludes that being Black makes you more likely to be a “child abuser” – instead of concluding that being Black makes you more likely to be falsely reported, wrongly substantiated and needlessly have your children taken.

Or, as CDSS put it in a 2019 memo sharply critical of the ethics review:

CDSS does not agree that it is ethical to include race in the algorithm. Removing race from the algorithm does not eliminate bias, since race is correlated with many variables. However, explicitly including race as a variable simply provides an opportunity for more bias to seep into the algorithm’s predictions.
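
The self-fulfilling prophecy can be put in numbers. The sketch below is a hypothetical illustration, not a model of any real algorithm or dataset: it assumes two groups of families with exactly the same true rate of harm, and assumes only that families in group B with no harm at all are pulled into the system three times as often as those in group A. The `Group` class and every rate in it are invented for the example.

```python
from dataclasses import dataclass


@dataclass
class Group:
    name: str
    true_harm_rate: float       # share of families where a child is actually harmed
    involved_if_harm: float     # chance a family with real harm ends up "system involved"
    involved_if_no_harm: float  # chance a family with NO harm ends up "system involved"

    def system_involvement_rate(self) -> float:
        """Expected share of families carrying the label the algorithm is trained on."""
        return (self.true_harm_rate * self.involved_if_harm
                + (1 - self.true_harm_rate) * self.involved_if_no_harm)


# Hypothetical numbers: identical true harm, unequal surveillance.
group_a = Group("A", true_harm_rate=0.02, involved_if_harm=0.50, involved_if_no_harm=0.03)
group_b = Group("B", true_harm_rate=0.02, involved_if_harm=0.50, involved_if_no_harm=0.09)

ratio = group_b.system_involvement_rate() / group_a.system_involvement_rate()
print(f"True harm rate: A={group_a.true_harm_rate:.1%}, B={group_b.true_harm_rate:.1%}")
print(f"Group B looks {ratio:.1f}x as 'risky' when the label is system involvement")
```

Under these assumptions, an algorithm rewarded for predicting system involvement would score group B as far riskier even though the children are in no more danger. Anything correlated with group membership would pick up the same signal, which is the point CDSS makes about removing race not eliminating bias.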

Go ahead: Slap a risk score on every child!

The section on race only hints at the extremism of the ethics review’s authors.

California never asked for an algorithm that would be applied to every child at birth – an Orwellian concept known as “universal-level risk stratification.” It is so extreme one might think no one would ever do that – except that Putnam-Hornstein co-designed an algorithm that attempts to do just that in Pittsburgh.

But even though this wasn’t on the table, the California “ethics review” went out of its way to say, in effect: By the way, that would be fine, too!

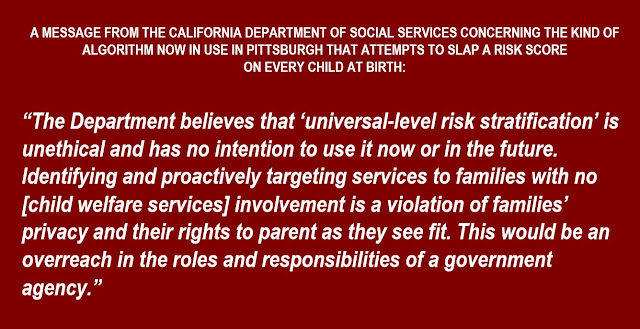

The California DSS appeared to be appalled, and said so, sending a message that everyone in America, but especially in Pittsburgh, needs to hear:

The Department believes that “universal-level risk stratification” is unethical and has no intention to use it now or in the future. Identifying and proactively targeting services to families with no [child welfare services] involvement is a violation of families’ privacy and their rights to parent as they see fit. This would be an overreach in the roles and responsibilities of a government agency.

No wonder California DSS seemed appalled. They were handed a so-called ethics review that says it’s fine to slap a risk score on every child at birth – and fine to label the child at higher risk of abuse just because of the parents’ race.

The Charge of the White Brigade

Who in the world would take such an extreme position? Someone who is a charter member of child welfare’s “caucus of denial” – someone who insists that child welfare is magically immune from the racial bias that permeates every other aspect of American life. Someone who says that the disproportionate rate at which the system intervenes in Black and Native American families is only because past discrimination (racism is so over, have you heard?) and the problems it caused have made Black people more likely to be bad parents. In short, someone with views just like those of Emily Putnam-Hornstein.

So now, meet the lead ethics reviewer for Putnam-Hornstein’s California algorithm, Prof. Brett Drake of Washington University.

Not only does Drake hold the same views on all this as Putnam-Hornstein, something he makes clear in the ethics review itself, he and Putnam-Hornstein co-authored a screed taking the same position on race, and denying even that poverty is confused with neglect. Another co-author is Sarah Font of Penn State, who issued a report with a graphic labeling everyone accused of child abuse as a “perpetrator” – even after they’ve been vindicated in the courts.

The lead author of the screed is Naomi Schaefer Riley, who proudly compares her own book attacking family preservation to the work of her fellow American Enterprise Institute “scholar” Charles Murray. Murray wrote The Bell Curve, a book maintaining that Black people are genetically inferior. The Southern Poverty Law Center labels Murray a “white nationalist extremist.”

In addition to the four authors, the screed has 13 co-signers. All of the authors and all but two of the co-signers appear to be white. And that’s just the beginning. I recommend taking a minute to Google them all.

This Charge of the White Brigade is nominally a critique of the movement to abolish the family policing system. The white authors label this movement led by Black scholars and Black activists “simple and misguided,” [emphasis added].

But the screed isn’t only about abolition. It recycles the same old attacks used for decades against any effort to curb the massive, unchecked power of the family police. Their argument, in somewhat more genteel language, boils down to: If you don’t let us do whatever we want, whenever we want, to whomever we want, you don’t care if children die!!!

So to review: An algorithm designed by someone who believes there is little or no racial bias in child welfare gets a seal of approval from an “ethics review” written by someone who believes there is little or no racial bias in child welfare.

Though California DSS was not fooled, as noted above, Los Angeles County has turned to Putnam-Hornstein to design still another algorithm. As the Imprint story revealed, it was rolled out on the sly for three months in three locations last year. Presumably, this was done because the communities in question might object to computerized racial profiling and try to stop it in its tracks. So even as predictive analytics proponents blather about consultation and transparency, their real approach is different. How ethical is that?

As noted above, go to the webpage where Putnam-Hornstein and her colleagues try to sell this latest algorithm and click on “ethical review” and guess what turns up? The same review rejected by the California Department of Social Services in 2019, but with a note promising an update.

If this latest experiment, using Los Angeles children and families as guinea pigs, is allowed to continue, presumably, Putnam-Hornstein, who does not believe there is racial bias in child welfare, will be in charge of determining if this latest algorithm exacerbates racial bias in child welfare.

All of which raises one question: How was Brett Drake chosen to do the California ethics review in the first place? It does not appear that he was chosen by California DSS. They say that the ethics review

was a required deliverable for a research grant that supported exploration of whether a [predictive risk modeling] tool could successfully assess risk of future [child welfare services] involvement.

When it comes to ethics reviewers, Putnam-Hornstein has had a remarkable run of luck.

A co-author of an ethics review of the Allegheny Family Screening Tool (AFST), the first algorithm Putnam-Hornstein co-authored for Pittsburgh, is a faculty colleague of Putnam-Hornstein’s partner in creating that algorithm. The two have co-authored papers together. But even those reviewers said that one reason AFST was ethical is that it would not be used on every child at birth.

Needless to say, this caused a problem when Allegheny County decided it wanted to use another algorithm co-authored by Putnam-Hornstein to do just that. So they commissioned two more ethics reviews – including one from another ideological soulmate of Putnam-Hornstein.

But oddly, the county didn’t rush to publish them. In 2019, county officials just declared that they’d gotten a seal of approval from the ethics reviewers. That is true. But the story is a little more complex than that. It’s a story for part two, which you can read here.